Download Google Cloud Associate Data Practitioner.Associate-Data-Practitioner.ExamTopics.2026-04-09.98q.tqb

| Vendor: | Google |
|---|---|
| Exam Code: | Associate-Data-Practitioner |
| Exam Name: | Google Cloud Associate Data Practitioner |
| Date: | Apr 09, 2026 |
| File Size: | 488 KB |

How to open TQB files?

Files with TQB (Taurus Question Bank) extension can be opened by Taurus Exam Studio.

Demo Questions

Question 1

Your team wants to create a monthly report to analyze inventory data that is updated daily. You need to aggregate the inventory counts by using only the most recent month of data, and save the results to be used in a Looker Studio dashboard. What should you do?

- A. Create a materialized view in BigQuery that uses the SUM() function and the DATE_SUB() function.

- B. Create a saved query in the BigQuery console that uses the SUM() function and the DATE_SUB() function. Re-run the saved query every month, and save the results to a BigQuery table.

- C. Create a BigQuery table that uses the SUM() function and the _PARTITIONDATE filter.

- D. Create a BigQuery table that uses the SUM() function and the DATE_DIFF() function.

Correct answer: A
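What the query in the correct answer computes can be checked locally. This is a minimal pure-Python stand-in, not BigQuery code: it assumes each inventory row is a `(date, count)` pair, and `subtract_one_month` and `sum_last_month` are hypothetical helpers that mirror `SUM()` filtered by `DATE_SUB(CURRENT_DATE(), INTERVAL 1 MONTH)`, including the end-of-month clamping that `DATE_SUB` applies.

```python
import calendar
from datetime import date


def subtract_one_month(d: date) -> date:
    """Step back one calendar month, clamping to the month's last day like DATE_SUB."""
    year, month = (d.year, d.month - 1) if d.month > 1 else (d.year - 1, 12)
    last_day = calendar.monthrange(year, month)[1]
    return date(year, month, min(d.day, last_day))


def sum_last_month(rows: list[tuple[date, int]], today: date) -> int:
    """SUM(count) over rows whose date falls within the most recent month."""
    cutoff = subtract_one_month(today)
    return sum(count for day, count in rows if cutoff <= day <= today)
```

For example, with `today = date(2026, 3, 31)` the cutoff clamps to `date(2026, 2, 28)`, because February 2026 has only 28 days.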

Question 2

You have a BigQuery dataset containing sales data. This data is actively queried for the first 6 months. After that, the data is not queried but needs to be retained for 3 years for compliance reasons. You need to implement a data management strategy that meets access and compliance requirements, while keeping cost and administrative overhead to a minimum. What should you do?

- A. Use BigQuery long-term storage for the entire dataset. Set up a Cloud Run function to delete the data from BigQuery after 3 years.

- B. Partition a BigQuery table by month. After 6 months, export the data to Coldline storage. Implement a lifecycle policy to delete the data from Cloud Storage after 3 years.

- C. Set up a scheduled query to export the data to Cloud Storage after 6 months. Write a stored procedure to delete the data from BigQuery after 3 years.

- D. Store all data in a single BigQuery table without partitioning or lifecycle policies.

Correct answer: B
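The deletion half of the correct answer is a Cloud Storage lifecycle rule. A minimal sketch, assuming three years is approximated as 1,095 days (leap days ignored); note that the `age` condition counts days since the exported object was created in Cloud Storage, not since the original sale date. `lifecycle_config` is a hypothetical helper.

```python
import json

DAYS_PER_YEAR = 365  # assumption: leap days are ignored


def lifecycle_config(retention_years: int) -> dict:
    """Build a Cloud Storage lifecycle configuration with a single Delete rule."""
    return {
        "lifecycle": {
            "rule": [
                {
                    "action": {"type": "Delete"},
                    # "age" is measured in days since the object was created
                    "condition": {"age": retention_years * DAYS_PER_YEAR},
                }
            ]
        }
    }


if __name__ == "__main__":
    # Save this output to a file and apply it with
    # `gcloud storage buckets update gs://BUCKET --lifecycle-file=FILE`.
    print(json.dumps(lifecycle_config(3), indent=2))
```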

Question 3

You have created a LookML model and dashboard that shows daily sales metrics for five regional managers to use. You want to ensure that the regional managers can only see sales metrics specific to their region. You need an easy-to-implement solution. What should you do?

- A. Create a sales_region user attribute, and assign each manager’s region as the value of their user attribute. Add an access_filter Explore filter on the region_name dimension by using the sales_region user attribute.

- B. Create five different Explores with the sql_always_filter Explore filter applied on the region_name dimension. Set each region_name value to the corresponding region for each manager.

- C. Create separate Looker dashboards for each regional manager. Set the default dashboard filter to the corresponding region for each manager.

- D. Create separate Looker instances for each regional manager. Copy the LookML model and dashboard to each instance. Provision viewer access to the corresponding manager.

Correct answer: A

Question 4

You need to design a data pipeline that ingests data from CSV, Avro, and Parquet files into Cloud Storage. The data includes raw user input. You need to remove all malicious SQL injections before storing the data in BigQuery. Which data manipulation methodology should you choose?

- A. EL

- B. ELT

- C. ETL

- D. ETLT

Correct answer: C
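The ETL ordering, with the transform running before the load, can be sketched in Python. This is illustrative only: the regular expression is a hypothetical and deliberately naive filter, not a real SQL-injection defense, and the list `warehouse` stands in for the BigQuery table.

```python
import csv
import io
import re

# Hypothetical, deliberately naive pattern; a real pipeline would apply strict
# per-column validation rules rather than a keyword blocklist.
SUSPICIOUS = re.compile(r"(--|;|\b(drop|delete|insert|update|union)\b)", re.IGNORECASE)


def extract(csv_text: str) -> list[dict]:
    """E: read raw rows from a CSV payload."""
    return list(csv.DictReader(io.StringIO(csv_text)))


def transform(rows: list[dict]) -> list[dict]:
    """T: drop rows whose free-text field looks like an injection attempt."""
    return [row for row in rows if not SUSPICIOUS.search(row["comment"])]


def load(rows: list[dict], sink: list) -> None:
    """L: stand-in for the BigQuery load; only cleaned rows ever reach it."""
    sink.extend(rows)
```

Because `transform` runs before `load`, malicious input never lands in the warehouse; in ELT it would be stored first and cleaned afterwards.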

Question 5

Your retail organization stores sensitive application usage data in Cloud Storage. You need to encrypt the data without the operational overhead of managing encryption keys. What should you do?

- A. Use Google-managed encryption keys (GMEK).

- B. Use customer-managed encryption keys (CMEK).

- C. Use customer-supplied encryption keys (CSEK).

- D. Use customer-supplied encryption keys (CSEK) for the sensitive data and customer-managed encryption keys (CMEK) for the less sensitive data.

Correct answer: A

Question 6

You work for a financial organization that stores transaction data in BigQuery. Your organization has a regulatory requirement to retain data for a minimum of seven years for auditing purposes. You need to ensure that the data is retained for seven years using an efficient and cost-optimized approach. What should you do?

- A. Create a partition by transaction date, and set the partition expiration policy to seven years.

- B. Set the table-level retention policy in BigQuery to seven years.

- C. Set the dataset-level retention policy in BigQuery to seven years.

- D. Export the BigQuery tables to Cloud Storage daily, and enforce a lifecycle management policy that has a seven-year retention rule.

Correct answer: B

Question 7

You need to create a weekly aggregated sales report based on a large volume of data. You want to use Python to design an efficient process for generating this report. What should you do?

- A. Create a Cloud Run function that uses NumPy. Use Cloud Scheduler to schedule the function to run once a week.

- B. Create a Colab Enterprise notebook and use the bigframes.pandas library. Schedule the notebook to execute once a week.

- C. Create a Cloud Data Fusion and Wrangler flow. Schedule the flow to run once a week.

- D. Create a Dataflow directed acyclic graph (DAG) coded in Python. Use Cloud Scheduler to schedule the code to run once a week.

Correct answer: D

Question 8

Your organization has decided to move their on-premises Apache Spark-based workload to Google Cloud. You want to be able to manage the code without needing to provision and manage your own cluster. What should you do?

- A. Migrate the Spark jobs to Dataproc Serverless.

- B. Configure a Google Kubernetes Engine cluster with Spark operators, and deploy the Spark jobs.

- C. Migrate the Spark jobs to Dataproc on Google Kubernetes Engine.

- D. Migrate the Spark jobs to Dataproc on Compute Engine.

Correct answer: A

Question 9

You are developing a data ingestion pipeline to load small CSV files into BigQuery from Cloud Storage. You want to load these files upon arrival to minimize data latency. You want to accomplish this with minimal cost and maintenance. What should you do?

- A. Use the bq command-line tool within a Cloud Shell instance to load the data into BigQuery.

- B. Create a Cloud Composer pipeline to load new files from Cloud Storage to BigQuery and schedule it to run every 10 minutes.

- C. Create a Cloud Run function to load the data into BigQuery that is triggered when data arrives in Cloud Storage.

- D. Create a Dataproc cluster to pull CSV files from Cloud Storage, process them using Spark, and write the results to BigQuery.

Correct answer: C
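The event-driven pattern in the correct answer can be sketched in Python. This is a minimal sketch, assuming a function deployed with a Cloud Storage object-finalized trigger whose event payload carries `bucket` and `name` fields; `load_new_csv` and the table ID are hypothetical, and the BigQuery client is passed in so the core logic can run without credentials.

```python
def load_new_csv(event_data: dict, client, table_id: str, job_config=None):
    """Load the object named in a Cloud Storage finalize event into BigQuery.

    `client` is anything with a `load_table_from_uri` method, such as
    `google.cloud.bigquery.Client`. Returns the source URI, or None when the
    uploaded object is not a CSV file.
    """
    uri = f"gs://{event_data['bucket']}/{event_data['name']}"
    if not uri.lower().endswith(".csv"):
        return None  # ignore non-CSV uploads
    job = client.load_table_from_uri(uri, table_id, job_config=job_config)
    job.result()  # wait for the load job to finish; raises if it failed
    return uri


# Production wiring, commented out so this sketch runs without the Google
# Cloud libraries installed. Names in CAPS are placeholders.
#
# import functions_framework
# from google.cloud import bigquery
#
# @functions_framework.cloud_event
# def on_upload(cloud_event):
#     config = bigquery.LoadJobConfig(
#         source_format=bigquery.SourceFormat.CSV,
#         skip_leading_rows=1,
#         autodetect=True,
#     )
#     load_new_csv(cloud_event.data, bigquery.Client(),
#                  "PROJECT.DATASET.TABLE", config)
```

Because the function runs only when a file arrives, there is no cluster or scheduler to maintain, and latency is limited to the load job itself.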

Question 10

Your organization has a petabyte of application logs stored as Parquet files in Cloud Storage. You need to quickly perform a one-time SQL-based analysis of the files and join them to data that already resides in BigQuery. What should you do?

- A. Create a Dataproc cluster, and write a PySpark job to join the data from BigQuery to the files in Cloud Storage.

- B. Launch a Cloud Data Fusion environment, use plugins to connect to BigQuery and Cloud Storage, and use the SQL join operation to analyze the data.

- C. Create external tables over the files in Cloud Storage, and perform SQL joins to tables in BigQuery to analyze the data.

- D. Use the bq load command to load the Parquet files into BigQuery, and perform SQL joins to analyze the data.

Correct answer: C
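For the external-table approach, the DDL can be generated from Python. A minimal sketch, assuming hypothetical project, dataset, and bucket names; `external_table_ddl` is not a library function, and the statement it returns would be run in BigQuery before joining the external table to native tables in an ordinary `SELECT ... JOIN`.

```python
def external_table_ddl(table_id: str, uris: list[str]) -> str:
    """Return BigQuery DDL defining an external table over Parquet files.

    The data stays in Cloud Storage; BigQuery reads it at query time, so no
    load step is needed for a one-time analysis.
    """
    uri_list = ", ".join(f"'{uri}'" for uri in uris)
    return (
        f"CREATE OR REPLACE EXTERNAL TABLE `{table_id}`\n"
        f"OPTIONS (format = 'PARQUET', uris = [{uri_list}])"
    )


if __name__ == "__main__":
    # Hypothetical names; the wildcard URI matches every Parquet file under the prefix.
    print(external_table_ddl("my-project.logs.app_logs_ext",
                             ["gs://my-log-bucket/app-logs/*.parquet"]))
```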

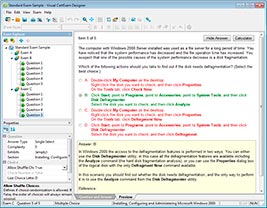

HOW TO OPEN VCE FILES

Use VCE Exam Simulator to open VCE files

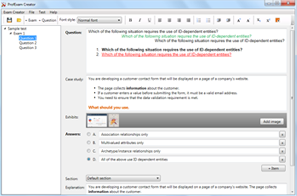

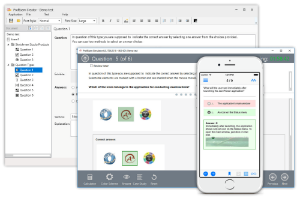

HOW TO OPEN VCEX FILES

Use ProfExam Simulator to open VCEX files

ProfExam at a 20% markdown

You have the opportunity to purchase ProfExam at a 20% reduced price

Get Now!