Download Professional Cloud Architect on Google Cloud Platform.Professional-Cloud-Architect.ExamTopics.2026-03-26.331q.tqb

| Field | Value |
| --- | --- |
| Vendor | |
| Exam Code | Professional-Cloud-Architect |
| Exam Name | Professional Cloud Architect on Google Cloud Platform |
| Date | Mar 26, 2026 |
| File Size | 2 MB |

How to open TQB files?

Files with TQB (Taurus Question Bank) extension can be opened by Taurus Exam Studio.

Demo Questions

Question 1

Your company is a global financial services provider that processes and analyzes a high volume of credit card transactions in real time for fraud detection. Your analytics team must also run complex batch queries on the same transaction data for daily reporting. You need to design a data processing solution that handles both real-time and batch processing of the transaction data while minimizing operational overhead and infrastructure management. What should you do? (Choose two.)

- A. Use Dataprep to ingest the transactions.

- B. Use Dataflow to process the streaming data.

- C. Use a Dataproc cluster for both the streaming and batch workloads.

- D. Use BigQuery for the batch analytics reports.

- E. Use Firestore to store and analyze the transaction data.

Correct answer: B, D

Question 2

Your company has hired an external auditing firm to perform a compliance audit. Your company’s governance policy requires that external auditors be managed in a single Google Group that is granted temporary, read-only access to a Cloud Storage bucket named audit-evidence-bucket. Access must be traceable to the individual auditor's identity and be active only for the duration of the audit engagement, which runs the entire month of October. You need a secure access control strategy that avoids administrative overhead and complies with your company's governance policy. What should you do?

- A. Apply an IAM policy binding that grants the roles/storage.objectViewer role to the Google Group. Configure this binding with a time-based IAM Condition that automatically grants access from October 1 to November 1.

- B. Create a service account, and grant it the roles/storage.objectViewer role on the bucket. Generate and share Signed URLs for each object in the bucket with an expiration date of November 1.

- C. Use Cloud Scheduler to run a Cloud Run functions script that adds the IAM binding of roles/storage.objectViewer to the Google Group on October 1 and another that removes the IAM binding on November 1.

- D. Use Workforce Identity Federation to map the auditors’ group to the Google Group. Bind the roles/storage.objectViewer role to this Google Group. Configure a 1-month session duration on the provider.

Correct answer: A
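
The time-bound binding in the correct answer can be sketched as follows. This is a minimal illustration, not a definitive implementation: the group address and the audit year are assumptions (the question names neither), and the dictionary only mirrors the shape of a conditional IAM binding. It does not call any Google Cloud API.

```python
import json
from datetime import datetime, timezone

# Hypothetical values: the question does not give a group address or a year.
AUDITOR_GROUP = "group:external-auditors@example.com"
AUDIT_YEAR = 2026


def audit_window_binding(group: str, year: int) -> dict:
    """Build a conditional binding that is valid only during October of `year` (UTC)."""
    start = datetime(year, 10, 1, tzinfo=timezone.utc)
    end = datetime(year, 11, 1, tzinfo=timezone.utc)
    fmt = "%Y-%m-%dT%H:%M:%SZ"
    # CEL expression evaluated by IAM on every request.
    expression = (
        f'request.time >= timestamp("{start.strftime(fmt)}") && '
        f'request.time < timestamp("{end.strftime(fmt)}")'
    )
    return {
        "role": "roles/storage.objectViewer",
        "members": [group],
        "condition": {
            "title": "october-audit-window",
            "description": "Read-only access for the audit engagement",
            "expression": expression,
        },
    }


binding = audit_window_binding(AUDITOR_GROUP, AUDIT_YEAR)
print(json.dumps(binding, indent=2))
```

Because the role is bound to the group and not to a shared credential, each request is still attributed to the individual auditor's account, and access lapses once the condition stops evaluating to true.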

Question 3

Your employer is a financial services company that recently acquired a popular fintech startup. The startup's core application is a monolithic Python application running on a managed instance group of Compute Engine virtual machines with a single, large PostgreSQL database. Your development team struggles with slow deployment cycles, and the monolithic design of the startup's core application makes it difficult to integrate new ML-powered fraud detection models. You need a long-term strategy that improves developer agility and positions the company to leverage Google Cloud's advanced data and AI capabilities for future innovations. What should you do?

- A. Deploy the ML fraud detection model to a Vertex AI endpoint. Create a REST API for the model and modify the monolithic Python application to call this endpoint for real-time fraud analysis.

- B. Containerize the application, deploy it to Google Kubernetes Engine (GKE), and migrate the PostgreSQL database to Cloud SQL for PostgreSQL.

- C. Propose a phased, event-driven migration to a microservices architecture. Use Pub/Sub for asynchronous communication and deploy the fraud models on Vertex AI endpoints.

- D. Migrate the PostgreSQL database to Cloud SQL for PostgreSQL. Replicate the data into BigQuery using Datastream, and then train and deploy the fraud detection models directly within BigQuery using BigQuery ML.

Correct answer: C

Question 4

A large, multinational corporation is migrating to Google Cloud. The company has several distinct business units: Finance, Marketing, and Research and Development (R&D). The central security team has mandated governance requirements for each business unit:

- Finance: Must be restricted to deploying resources only in specific, compliant regions (us-central1 and europe-west2). Access to their projects must be tightly controlled by a dedicated finance-admins group.

- Marketing: Needs separate environments for production and development, with different teams managing each environment.

- R&D: Requires maximum flexibility to experiment with new services but must be completely isolated to prevent any impact on production systems.

- Global Auditing: A central compliance team requires read-only access to view all resources across the entire company for auditing purposes.

You need to design a resource hierarchy that enforces these security policies at scale according to the Google Cloud Well-Architected Framework while providing the correct level of autonomy for each business unit. What should you do?

- A. Create a folder for each department under the root Organization node. Apply the resource location Organization Policy on the Finance folder. Within the Marketing folder, create separate projects for mktg-prod and mktg-dev. Grant the compliance team the roles/viewer role at the Organization level.

- B. Place all projects directly under the Organization node. Use network tags and service accounts to enforce security boundaries between the different department workloads. Apply the resource location Organization Policy on the Finance project.

- C. Create separate Google Cloud Organizations for each department (Finance, Marketing, and R&D). Grant the compliance team the roles/viewer role for each organization.

- D. Create a single project for each department. Apply the resource location policy directly to the Finance project. Grant the compliance team the roles/browser role on each project individually.

Correct answer: A
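
The Finance location restriction in the folder-based design could be expressed as an Organization Policy on the `gcp.resourceLocations` constraint. The sketch below is a rough illustration under stated assumptions: the folder ID is hypothetical, the dictionary follows the Organization Policy v2 JSON shape as I understand it, and the `in:<region>-locations` value-group names should be verified against the current documentation before use.

```python
import json

# Hypothetical folder ID for the Finance folder; not given in the question.
FINANCE_FOLDER_ID = "123456789012"
# Regions taken from the Finance requirement in the question.
FINANCE_REGIONS = ["us-central1", "europe-west2"]


def resource_location_policy(folder_id: str, regions: list[str]) -> dict:
    """Build a policy body that allows resources only in the given regions."""
    return {
        "name": f"folders/{folder_id}/policies/gcp.resourceLocations",
        "spec": {
            "rules": [
                {
                    "values": {
                        # Assumed value-group naming: in:<region>-locations
                        "allowedValues": [f"in:{r}-locations" for r in regions]
                    }
                }
            ]
        },
    }


policy = resource_location_policy(FINANCE_FOLDER_ID, FINANCE_REGIONS)
print(json.dumps(policy, indent=2))
```

Attaching the policy to the folder means every project created under Finance inherits the restriction, which is what lets the hierarchy enforce the rule at scale.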

Question 5

You are designing a central, automated infrastructure deployment process for your organization using Terraform and Cloud Build. The security team prohibits the use of long-lived, static service account keys in any CI/CD pipeline. Additionally, while developers can propose infrastructure changes for peer review, they must not have permissions to directly apply changes in the production project. You need to design a secure and automated workflow for applying Terraform changes that meets the security team's requirements and ensures proper governance. What should you do?

- A. Configure the Cloud Build pipeline to use service account impersonation. Set up a trigger that automatically runs terraform apply when a pull request is merged.

- B. Use service account impersonation in Cloud Build. Configure the pipeline to run terraform plan on pull requests, and require manual approval before running terraform apply.

- C. Configure the pipeline to only run terraform plan. After a pull request is approved, have an authorized developer run terraform apply from a secured workstation.

- D. Create a privileged service account and store its JSON key in Secret Manager. Configure the Cloud Build pipeline to fetch this key during execution to authenticate Terraform.

Correct answer: B

Question 6

A financial services company is decommissioning one of its on-premises data centers. As part of this initiative, the company needs to perform a one-time migration of 500 TB of historical transaction archives to a Cloud Storage bucket for long-term retention. The data center’s internet egress is 1 Gbps, which is shared with critical business operations. You must complete the secure data transfer within a 60-day window to meet the decommissioning deadline. What should you do?

- A. Provision a Partner Interconnect connection with a 10 Gbps capacity to accelerate the data transfer, and then use Storage Transfer Service.

- B. Write a script that uses the gcloud storage cp --parallel command to upload the data in chunks over the public internet during off-peak hours.

- C. Use Storage Transfer Service to create an agent-based transfer job that moves the data from the on-premises file servers directly to the Cloud Storage bucket.

- D. Order a Transfer Appliance, copy the data to the appliance using your high-speed local network, and ship it back to Google to upload the data into your Cloud Storage bucket.

Correct answer: D
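
A quick back-of-the-envelope calculation shows why an online transfer is risky here. The sketch assumes decimal units (1 TB = 10^12 bytes) and ignores protocol overhead; the usable-fraction values are illustrative assumptions, since the question only says the link is shared.

```python
DATA_TB = 500        # archive size from the question
LINK_GBPS = 1        # data center egress from the question
WINDOW_DAYS = 60     # decommissioning deadline from the question
SECONDS_PER_DAY = 86_400


def transfer_days(data_tb: float, link_gbps: float, usable_fraction: float) -> float:
    """Days needed to move data_tb over link_gbps when only a fraction is usable."""
    total_bits = data_tb * 1e12 * 8
    usable_bits_per_second = link_gbps * 1e9 * usable_fraction
    return total_bits / usable_bits_per_second / SECONDS_PER_DAY


# Illustrative shares of the link that the migration might be allowed to use.
for fraction in (1.0, 0.5, 0.25):
    days = transfer_days(DATA_TB, LINK_GBPS, fraction)
    verdict = "fits" if days <= WINDOW_DAYS else "misses"
    print(f"{fraction:.0%} of the link: {days:.1f} days ({verdict} the {WINDOW_DAYS}-day window)")
```

Even with the entire 1 Gbps link dedicated to the migration, the transfer needs roughly 46 days, and at half the link it already exceeds the 60-day window. Since the link is shared with critical operations, shipping the data on a Transfer Appliance is the safer choice.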

Question 7

Your company uses a custom-built application running on a Compute Engine virtual machine (VM). This application processes real-time sales data and writes it to a zonal Persistent Disk. A recent internal audit requires that you implement a backup and recovery plan to protect against zonal failures. Your company has a strict policy that all backup data must be retained for at least 90 days and stored in a separate project with limited access. You need to implement a fully automated backup solution that meets these requirements with minimal operational overhead. What should you do?

- A. Write a script to create daily backups of the Persistent Disk. Copy the backups to a different zone and apply a label to each snapshot to indicate the deletion date.

- B. Use gcloud commands to create snapshots of the Persistent Disk. Store the snapshots in a regional Cloud Storage bucket and configure a lifecycle rule to delete objects older than 90 days.

- C. Create a snapshot schedule to automatically create Persistent Disk snapshots and use a script to move and store them in a multi-regional Cloud Storage bucket.

- D. Use the Backup and Disaster Recovery (DR) service to create a backup plan. Configure the backup plan to take daily snapshots and store them in a backup vault with a 90-day retention policy.

Correct answer: D

Question 8

You are deploying a highly confidential data processing workload on Google Cloud. Your company’s compliance framework mandates that cryptographic keys used for encrypting data at rest must be generated and stored exclusively within a validated Hardware Security Module (HSM). You want to use a fully integrated Google Cloud managed service to handle the lifecycle and usage of these keys. What should you do?

- A. Use Customer-Supplied Encryption Keys (CSEK) by providing your on-premises generated key with each API request.

- B. Import your on-premises HSM key material into a Cloud KMS key with the SOFTWARE protection level.

- C. Create a new key in Cloud Key Management Service (Cloud KMS) with the HSM protection level.

- D. Configure Cloud External Key Manager (Cloud EKM) to connect to your on-premises HSM.

Correct answer: C

Question 9

Your organization uses Google Kubernetes Engine (GKE) and Amazon Elastic Kubernetes Service (EKS) to manage a complex Kubernetes environment across multiple cloud providers. You need to deploy a solution that streamlines configuration management, enforces security policies, and ensures consistent application deployment across all of the environments. You want to follow Google-recommended practices. What should you do?

- A. Leverage Argo CD for GitOps-based continuous delivery and Open Policy Agent (OPA) for policy enforcement, and develop a controller for multi-cluster configuration management.

- B. Deploy Crossplane for managing cloud resources as Kubernetes objects, FluxCD for GitOps-based configuration synchronization, and Kyverno for policy enforcement.

- C. Deploy Kustomize for configuration customization, Config Sync with multiple Git repositories, and a script to enforce security policies.

- D. Utilize Config Sync as part of GKE to synchronize configurations from a centralized repository, and utilize Policy Controller to enforce policies using OPA Gatekeeper.

Correct answer: D

Question 10

Your team plans to use Vertex AI to develop and deploy machine learning models for various use cases, including fraud detection, product recommendations, and customer churn prediction. You want to enhance the security posture of the Vertex AI and Workbench environment by restricting data exfiltration. What should you do?

- A. Enable Private Google Access for the VPC network to allow Vertex AI services to access public Google services without traversing the public internet.

- B. Enable VPC Flow Logs to monitor network traffic to and from Vertex AI services and to identify suspicious activity.

- C. Create a service perimeter and include ml.googleapis.com and document.googleapis.com as protected services.

- D. Create a service perimeter and include aiplatform.googleapis.com and notebooks.googleapis.com as protected services.

Correct answer: D

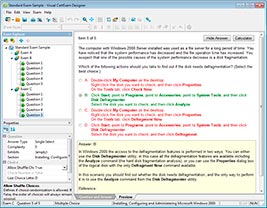

HOW TO OPEN VCE FILES

Use VCE Exam Simulator to open VCE files

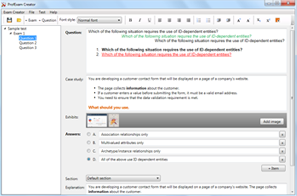

HOW TO OPEN VCEX FILES

Use ProfExam Simulator to open VCEX files

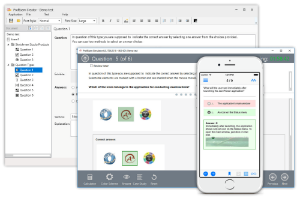

ProfExam at a 20% markdown

You have the opportunity to purchase ProfExam at a 20% reduced price

Get Now!