Download Professional Data Engineer on Google Cloud Platform.Professional-Data-Engineer.Dump4Pass.2024-10-31.68q.vcex

| Field | Value |
| --- | --- |
| Vendor | |
| Exam Code | Professional-Data-Engineer |
| Exam Name | Professional Data Engineer on Google Cloud Platform |
| Date | Oct 31, 2024 |
| File Size | 437 KB |

How to open VCEX files?

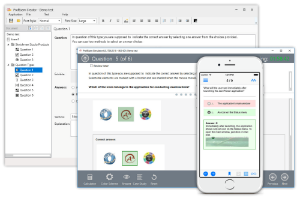

Files with VCEX extension can be opened by ProfExam Simulator.

Purchase

Coupon: TAURUSSIM_20OFF

Discount: 20%

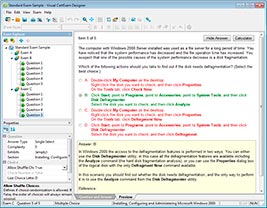

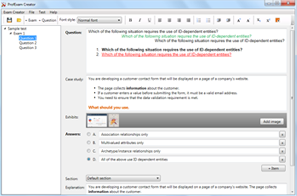

Demo Questions

Question 1

Your weather app queries a database every 15 minutes to get the current temperature. The frontend is powered by Google App Engine and serves millions of users. How should you design the frontend to respond to a database failure?

- Issue a command to restart the database servers.

- Retry the query with exponential backoff, up to a cap of 15 minutes.

- Retry the query every second until it comes back online to minimize staleness of data.

- Reduce the query frequency to once every hour until the database comes back online.

Correct answer: B
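
The retry policy in option B can be sketched as follows. This is a minimal illustration, not App Engine code: `backoff_delays`, `query_with_backoff`, `fetch`, and the parameters `base_delay`, `cap`, and `max_attempts` are hypothetical names, and the 900-second cap simply mirrors the 15-minute figure in the option. Jitter is included as a common addition that the question itself does not mention.

```python
import random
import time


def backoff_delays(base_delay=1.0, cap=900.0, max_attempts=12):
    """Yield exponentially growing delays in seconds, never exceeding `cap`."""
    for attempt in range(max_attempts):
        yield min(cap, base_delay * (2 ** attempt))


def query_with_backoff(fetch, base_delay=1.0, cap=900.0, max_attempts=12,
                       sleep=time.sleep, jitter=True):
    """Call `fetch` until it succeeds, waiting longer after each failure.

    `fetch` is a hypothetical zero-argument callable that raises on failure.
    The last failure is re-raised once `max_attempts` calls have been made.
    """
    if max_attempts < 1:
        raise ValueError("max_attempts must be at least 1")
    delays = list(backoff_delays(base_delay, cap, max_attempts))
    for attempt, delay in enumerate(delays):
        try:
            return fetch()
        except Exception:  # a real client would catch narrower error types
            if attempt == len(delays) - 1:
                raise
            # Random jitter keeps many clients from retrying in lockstep.
            sleep(random.uniform(0, delay) if jitter else delay)
```

With the defaults, the waits double from 1 second up to 512 seconds and then stay at the 900-second cap, so a recovering database is not hit by millions of clients retrying every second.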

Question 2

You want to process payment transactions in a point-of-sale application that will run on Google Cloud Platform. Your user base could grow exponentially, but you do not want to manage infrastructure scaling.

Which Google database service should you use?

- Cloud SQL

- BigQuery

- Cloud Bigtable

- Cloud Datastore

Correct answer: D

Question 3

You need to store and analyze social media postings in Google BigQuery at a rate of 10,000 messages per minute in near real-time. Initially, you design the application to use streaming inserts for individual postings. Your application also performs data aggregations right after the streaming inserts. You discover that the queries after streaming inserts do not exhibit strong consistency, and reports from the queries might miss in-flight data. How can you adjust your application design?

- Re-write the application to load accumulated data every 2 minutes.

- Convert the streaming insert code to batch load for individual messages.

- Load the original message to Google Cloud SQL, and export the table every hour to BigQuery via streaming inserts.

- Estimate the average latency for data availability after streaming inserts, and always run queries after waiting twice as long.

Correct answer: D

Question 4

Business owners at your company have given you a database of bank transactions. Each row contains the user ID, transaction type, transaction location, and transaction amount. They ask you to investigate what type of machine learning can be applied to the data. Which three machine learning applications can you use?

(Choose three.)

- Supervised learning to determine which transactions are most likely to be fraudulent.

- Unsupervised learning to determine which transactions are most likely to be fraudulent.

- Clustering to divide the transactions into N categories based on feature similarity.

- Supervised learning to predict the location of a transaction.

- Reinforcement learning to predict the location of a transaction.

- Unsupervised learning to predict the location of a transaction.

Correct answer: BCD

Question 5

Your company uses a proprietary system to send inventory data every 6 hours to a data ingestion service in the cloud. Transmitted data includes a payload of several fields and the timestamp of the transmission. If there are any concerns about a transmission, the system re-transmits the data. How should you deduplicate the data most efficiently?

- Assign global unique identifiers (GUID) to each data entry.

- Compute the hash value of each data entry, and compare it with all historical data.

- Store each data entry as the primary key in a separate database and apply an index.

- Maintain a database table to store the hash value and other metadata for each data entry.

Correct answer: A
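
The idea behind option A can be sketched as follows: the sender attaches an identifier to each entry once, reuses it on every re-transmission, and the receiver drops any identifier it has already seen. This is an illustration under assumptions, not the proprietary system's design: `make_entry`, `transmit`, `Deduplicator`, and the field names are hypothetical, and an in-memory set stands in for whatever durable store a real ingestion service would use.

```python
import uuid


def make_entry(payload):
    """Sender side: tag a payload with a GUID once, before the first send."""
    return {"guid": str(uuid.uuid4()), "payload": payload}


def transmit(entry, timestamp):
    """Copy the entry and stamp the send time.

    The GUID is reused, so a re-transmission differs only in its timestamp.
    """
    return {**entry, "sent_at": timestamp}


class Deduplicator:
    """Receiver side: accept each GUID at most once."""

    def __init__(self):
        self._seen = set()  # stand-in for a durable key lookup

    def accept(self, message):
        if message["guid"] in self._seen:
            return False  # duplicate re-transmission, drop it
        self._seen.add(message["guid"])
        return True
```

Because the transmission timestamp changes on every re-send, a hash computed over the whole message would not match its duplicates, whereas the GUID stays stable and needs only a single key lookup.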

Question 6

Your company has hired a new data scientist who wants to perform complicated analyses across very large datasets stored in Google Cloud Storage and in a Cassandra cluster on Google Compute Engine. The scientist primarily wants to create labelled data sets for machine learning projects, along with some visualization tasks. She reports that her laptop is not powerful enough to perform her tasks and it is slowing her down. You want to help her perform her tasks. What should you do?

- Run a local version of Jupyter on the laptop.

- Grant the user access to Google Cloud Shell.

- Host a visualization tool on a VM on Google Compute Engine.

- Deploy Google Cloud Datalab to a virtual machine (VM) on Google Compute Engine.

Correct answer: D

Question 7

Flowlogistic Case Study

Company Overview

Flowlogistic is a leading logistics and supply chain provider. They help businesses throughout the world manage their resources and transport them to their final destination. The company has grown rapidly, expanding their offerings to include rail, truck, aircraft, and oceanic shipping.

Company Background

The company started as a regional trucking company, and then expanded into other logistics markets. Because they have not updated their infrastructure, managing and tracking orders and shipments has become a bottleneck. To improve operations, Flowlogistic developed proprietary technology for tracking shipments in real time at the parcel level. However, they are unable to deploy it because their technology stack, based on Apache Kafka, cannot support the processing volume. In addition, Flowlogistic wants to further analyze their orders and shipments to determine how best to deploy their resources.

Solution Concept

Flowlogistic wants to implement two concepts using the cloud:

- Use their proprietary technology in a real-time inventory-tracking system that indicates the location of their loads

- Perform analytics on all their orders and shipment logs, which contain both structured and unstructured data, to determine how best to deploy resources and which markets to expand into. They also want to use predictive analytics to learn earlier when a shipment will be delayed.

Existing Technical Environment

Flowlogistic architecture resides in a single data center:

- Databases
  - 8 physical servers in 2 clusters
    - SQL Server – user data, inventory, static data
  - 3 physical servers
    - Cassandra – metadata, tracking messages
  - 10 Kafka servers – tracking message aggregation and batch insert

- Application servers – customer front end, middleware for order/customs
  - 60 virtual machines across 20 physical servers
    - Tomcat – Java services
    - Nginx – static content
    - Batch servers

- Storage appliances
  - iSCSI for virtual machine (VM) hosts
  - Fibre Channel storage area network (FC SAN) – SQL server storage
  - Network-attached storage (NAS) – image storage, logs, backups

- 10 Apache Hadoop/Spark servers
  - Core Data Lake
  - Data analysis workloads

- 20 miscellaneous servers
  - Jenkins, monitoring, bastion hosts,

Business Requirements

- Build a reliable and reproducible environment with scaled parity of production.

- Aggregate data in a centralized Data Lake for analysis

- Use historical data to perform predictive analytics on future shipments

- Accurately track every shipment worldwide using proprietary technology

- Improve business agility and speed of innovation through rapid provisioning of new resources

- Analyze and optimize architecture for performance in the cloud

- Migrate fully to the cloud if all other requirements are met

Technical Requirements

- Handle both streaming and batch data

- Migrate existing Hadoop workloads

- Ensure architecture is scalable and elastic to meet the changing demands of the company.

- Use managed services whenever possible

- Encrypt data in flight and at rest

- Connect a VPN between the production data center and cloud environment

CEO Statement

We have grown so quickly that our inability to upgrade our infrastructure is really hampering further growth and efficiency. We are efficient at moving shipments around the world, but we are inefficient at moving data around.

We need to organize our information so we can more easily understand where our customers are and what they are shipping.

CTO Statement

IT has never been a priority for us, so as our data has grown, we have not invested enough in our technology. I have a good staff to manage IT, but they are so busy managing our infrastructure that I cannot get them to do the things that really matter, such as organizing our data, building the analytics, and figuring out how to implement the CFO’s tracking technology.

CFO Statement

Part of our competitive advantage is that we penalize ourselves for late shipments and deliveries. Knowing where our shipments are at all times has a direct correlation to our bottom line and profitability. Additionally, I don’t want to commit capital to building out a server environment.

Flowlogistic’s management has determined that the current Apache Kafka servers cannot handle the data volume for their real-time inventory tracking system. You need to build a new system on Google Cloud Platform (GCP) that will feed the proprietary tracking software. The system must be able to ingest data from a variety of global sources, process and query in real-time, and store the data reliably. Which combination of GCP products should you choose?

- Cloud Pub/Sub, Cloud Dataflow, and Cloud Storage

- Cloud Pub/Sub, Cloud Dataflow, and Local SSD

- Cloud Pub/Sub, Cloud SQL, and Cloud Storage

- Cloud Load Balancing, Cloud Dataflow, and Cloud Storage

Correct answer: A

Question 8

MJTelco Case Study

Company Overview

MJTelco is a startup that plans to build networks in rapidly growing, underserved markets around the world.

The company has patents for innovative optical communications hardware. Based on these patents, they can create many reliable, high-speed backbone links with inexpensive hardware.

Company Background

Founded by experienced telecom executives, MJTelco uses technologies originally developed to overcome communications challenges in space. Fundamental to their operation, they need to create a distributed data infrastructure that drives real-time analysis and incorporates machine learning to continuously optimize their topologies. Because their hardware is inexpensive, they plan to overdeploy the network allowing them to account for the impact of dynamic regional politics on location availability and cost.

Their management and operations teams are situated all around the globe, creating a many-to-many relationship between data consumers and providers in their system. After careful consideration, they decided public cloud is the perfect environment to support their needs.

Solution Concept

MJTelco is running a successful proof-of-concept (PoC) project in its labs. They have two primary needs:

- Scale and harden their PoC to support significantly more data flows generated when they ramp to more than 50,000 installations.

- Refine their machine-learning cycles to verify and improve the dynamic models they use to control topology definition.

MJTelco will also use three separate operating environments – development/test, staging, and production – to meet the needs of running experiments, deploying new features, and serving production customers.

Business Requirements

- Scale up their production environment with minimal cost, instantiating resources when and where needed in an unpredictable, distributed telecom user community.

- Ensure security of their proprietary data to protect their leading-edge machine learning and analysis.

- Provide reliable and timely access to data for analysis from distributed research workers

- Maintain isolated environments that support rapid iteration of their machine-learning models without affecting their customers.

Technical Requirements

- Ensure secure and efficient transport and storage of telemetry data

- Rapidly scale instances to support between 10,000 and 100,000 data providers with multiple flows each.

- Allow analysis and presentation against data tables tracking up to 2 years of data storing approximately 100m records/day

- Support rapid iteration of monitoring infrastructure focused on awareness of data pipeline problems both in telemetry flows and in production learning cycles.

CEO Statement

Our business model relies on our patents, analytics and dynamic machine learning. Our inexpensive hardware is organized to be highly reliable, which gives us cost advantages. We need to quickly stabilize our large distributed data pipelines to meet our reliability and capacity commitments.

CTO Statement

Our public cloud services must operate as advertised. We need resources that scale and keep our data secure. We also need environments in which our data scientists can carefully study and quickly adapt our models. Because we rely on automation to process our data, we also need our development and test environments to work as we iterate.

CFO Statement

The project is too large for us to maintain the hardware and software required for the data and analysis. Also, we cannot afford to staff an operations team to monitor so many data feeds, so we will rely on automation and infrastructure. Google Cloud’s machine learning will allow our quantitative researchers to work on our high-value problems instead of problems with our data pipelines.

You create a new report for your large team in Google Data Studio 360. The report uses Google BigQuery as its data source. It is company policy to ensure employees can view only the data associated with their region, so you create and populate a table for each region. You need to enforce the regional access policy to the data.

Which two actions should you take? (Choose two.)

- Ensure all the tables are included in a global dataset.

- Ensure each table is included in a dataset for a region.

- Adjust the settings for each table to allow a related region-based security group view access.

- Adjust the settings for each view to allow a related region-based security group view access.

- Adjust the settings for each dataset to allow a related region-based security group view access.

Correct answer: BE

Question 9

You are deploying a new storage system for your mobile application, which is a media streaming service. You decide the best fit is Google Cloud Datastore. You have entities with multiple properties, some of which can take on multiple values. For example, in the entity ‘Movie’ the property ‘actors’ and the property ‘tags’ have multiple values but the property ‘date released’ does not. A typical query would ask for all movies with actor=<actorname> ordered by date_released or all movies with tag=Comedy ordered by date_released. How should you avoid a combinatorial explosion in the number of indexes?

- Manually configure the index in your index config as follows:

- Manually configure the index in your index config as follows:

- Set the following in your entity options: exclude_from_indexes = ‘actors, tags’

- Set the following in your entity options: exclude_from_indexes = ‘date_published’

Correct answer: A
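
The combinatorial explosion the question refers to can be illustrated with plain arithmetic. In this sketch, a single index that covers several multi-valued properties needs one entry per combination of their values, whereas separate indexes that each pair one multi-valued property with `date_released` need only one entry per value. The `movie` entity and the helper names are hypothetical, and the functions model the counting only, not Datastore's actual storage.

```python
from itertools import product


def combined_index_entries(entity, multi_valued, single_valued):
    """Entries for ONE index covering all listed properties together."""
    value_lists = [entity[name] for name in multi_valued]
    value_lists += [[entity[name]] for name in single_valued]
    return list(product(*value_lists))


def separate_index_entries(entity, multi_valued, single_valued):
    """Entries for one index PER multi-valued property, each combined
    with the single-valued properties."""
    entries = []
    for name in multi_valued:
        entries += combined_index_entries(entity, [name], single_valued)
    return entries


# Hypothetical entity: 3 actors, 4 tags, one release date.
movie = {
    "actors": ["Actor A", "Actor B", "Actor C"],
    "tags": ["Comedy", "Classic", "Family", "Road trip"],
    "date_released": "1999-05-01",
}

combined = combined_index_entries(movie, ["actors", "tags"], ["date_released"])
separate = separate_index_entries(movie, ["actors", "tags"], ["date_released"])
print(len(combined), len(separate))  # 12 7
```

The combined count grows as the product of the value counts (3 × 4), while the separate indexes grow as their sum (3 + 4), and the gap widens quickly as more actors or tags are added.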

Question 10

You are implementing security best practices on your data pipeline. Currently, you are manually executing jobs as the Project Owner. You want to automate these jobs by taking nightly batch files containing non-public information from Google Cloud Storage, processing them with a Spark Scala job on a Google Cloud Dataproc cluster, and depositing the results into Google BigQuery.

How should you securely run this workload?

- Restrict the Google Cloud Storage bucket so only you can see the files.

- Grant the Project Owner role to a service account, and run the job with it

- Use a service account with the ability to read the batch files and to write to BigQuery

- Use a user account with the Project Viewer role on the Cloud Dataproc cluster to read the batch files and write to BigQuery

Correct answer: C
