Download Professional Data Engineer on Google Cloud Platform.Professional-Data-Engineer.Dump4Pass.2024-11-20.142q.vcex

| Field | Value |
| --- | --- |
| Vendor | |
| Exam Code | Professional-Data-Engineer |
| Exam Name | Professional Data Engineer on Google Cloud Platform |
| Date | Nov 20, 2024 |
| File Size | 607 KB |

How to open VCEX files?

Files with VCEX extension can be opened by ProfExam Simulator.


Demo Questions

Question 1

You create an important report for your large team in Google Data Studio 360. The report uses Google BigQuery as its data source. You notice that visualizations are not showing data that is less than 1 hour old.

What should you do?

- Disable caching by editing the report settings.

- Disable caching in BigQuery by editing table details.

- Refresh your browser tab showing the visualizations.

- Clear your browser history for the past hour, then reload the tab showing the visualizations.

Correct answer: A

Explanation:

Reference: https://support.google.com/datastudio/answer/7020039?hl=en

Question 2

Your weather app queries a database every 15 minutes to get the current temperature. The frontend is powered by Google App Engine and serves millions of users. How should you design the frontend to respond to a database failure?

- Issue a command to restart the database servers.

- Retry the query with exponential backoff, up to a cap of 15 minutes.

- Retry the query every second until it comes back online to minimize staleness of data.

- Reduce the query frequency to once every hour until the database comes back online.

Correct answer: B
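
As an illustration of answer B, the following is a minimal Python sketch of a retry loop with capped exponential backoff. The names `retry_with_backoff`, `query`, `base_delay`, and `max_attempts` are hypothetical and not part of the question; only the 15-minute cap comes from the answer text. The random jitter is an added assumption, included because many clients retrying on the same schedule would hit the database at the same instants.

```python
import random
import time


def retry_with_backoff(query, base_delay=1.0, max_delay=15 * 60,
                       max_attempts=12, sleep=time.sleep):
    """Call query() until it succeeds, doubling the wait bound after each failure.

    query, base_delay, and max_attempts are hypothetical; max_delay mirrors
    the 15-minute cap named in answer B.
    """
    delay = base_delay
    for attempt in range(1, max_attempts + 1):
        try:
            return query()
        except ConnectionError:
            if attempt == max_attempts:
                raise  # give up and let the caller serve cached data or an error
            # Sleep a random time up to the current bound (jitter is an assumption).
            sleep(random.uniform(0, delay))
            # Double the bound, but never exceed the cap.
            delay = min(delay * 2, max_delay)
```

Compared with retrying every second (the third option), the wait grows quickly, so a recovering database is not flooded by millions of frontends at once.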

Question 3

Your company handles data processing for a number of different clients. Each client prefers to use their own suite of analytics tools, with some allowing direct query access via Google BigQuery. You need to secure the data so that clients cannot see each other’s data. You want to ensure appropriate access to the data. Which three steps should you take? (Choose three.)

- Load data into different partitions.

- Load data into a different dataset for each client.

- Put each client’s BigQuery dataset into a different table.

- Restrict a client’s dataset to approved users.

- Only allow a service account to access the datasets.

- Use the appropriate identity and access management (IAM) roles for each client’s users.

Correct answer: BDF

Question 4

You want to process payment transactions in a point-of-sale application that will run on Google Cloud Platform. Your user base could grow exponentially, but you do not want to manage infrastructure scaling.

Which Google database service should you use?

- Cloud SQL

- BigQuery

- Cloud Bigtable

- Cloud Datastore

Correct answer: D

Question 5

You need to store and analyze social media postings in Google BigQuery at a rate of 10,000 messages per minute in near real-time. You initially design the application to use streaming inserts for individual postings. Your application also performs data aggregations right after the streaming inserts. You discover that the queries after streaming inserts do not exhibit strong consistency, and reports from the queries might miss in-flight data. How can you adjust your application design?

- Re-write the application to load accumulated data every 2 minutes.

- Convert the streaming insert code to batch load for individual messages.

- Load the original message to Google Cloud SQL, and export the table every hour to BigQuery via streaming inserts.

- Estimate the average latency for data availability after streaming inserts, and always run queries after waiting twice as long.

Correct answer: D
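
The approach in answer D can be sketched in a few lines of Python. This is only an illustration under stated assumptions: `latency_samples` stands for hypothetical measurements (in seconds) of how long streamed rows took to become visible to queries, and `run_query` is a placeholder for whatever issues the aggregation query. Neither name comes from the question.

```python
import statistics
import time


def query_delay(latency_samples, factor=2.0):
    """Return the wait time: `factor` times the average observed latency."""
    return factor * statistics.mean(latency_samples)


def run_after_settling(run_query, latency_samples, sleep=time.sleep):
    """Wait twice the average availability latency, then run the query."""
    sleep(query_delay(latency_samples))
    return run_query()
```

In practice the samples would need to be refreshed periodically, since the average latency can drift over time.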

Question 6

Your company is migrating their 30-node Apache Hadoop cluster to the cloud. They want to re-use Hadoop jobs they have already created and minimize the management of the cluster as much as possible. They also want to be able to persist data beyond the life of the cluster. What should you do?

- Create a Google Cloud Dataflow job to process the data.

- Create a Google Cloud Dataproc cluster that uses persistent disks for HDFS.

- Create a Hadoop cluster on Google Compute Engine that uses persistent disks.

- Create a Cloud Dataproc cluster that uses the Google Cloud Storage connector.

- Create a Hadoop cluster on Google Compute Engine that uses Local SSD disks.

Correct answer: D

Question 7

Business owners at your company have given you a database of bank transactions. Each row contains the user ID, transaction type, transaction location, and transaction amount. They ask you to investigate what type of machine learning can be applied to the data. Which three machine learning applications can you use? (Choose three.)

- Supervised learning to determine which transactions are most likely to be fraudulent.

- Unsupervised learning to determine which transactions are most likely to be fraudulent.

- Clustering to divide the transactions into N categories based on feature similarity.

- Supervised learning to predict the location of a transaction.

- Reinforcement learning to predict the location of a transaction.

- Unsupervised learning to predict the location of a transaction.

Correct answer: BCD

Question 8

You work for a car manufacturer and have set up a data pipeline using Google Cloud Pub/Sub to capture anomalous sensor events. You are using a push subscription in Cloud Pub/Sub that calls a custom HTTPS endpoint that you have created to take action on these anomalous events as they occur. Your custom HTTPS endpoint keeps receiving an inordinate number of duplicate messages. What is the most likely cause of these duplicate messages?

- The message body for the sensor event is too large.

- Your custom endpoint has an out-of-date SSL certificate.

- The Cloud Pub/Sub topic has too many messages published to it.

- Your custom endpoint is not acknowledging messages within the acknowledgement deadline.

Correct answer: D
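
To make answer D concrete: a push endpoint acknowledges a message by returning a success status code, so slow processing before the response delays the acknowledgement and can lead to redelivery. The sketch below, using only the Python standard library, shows one possible shape for such an endpoint: it decodes the request, hands the work to a queue, and responds immediately. `handle_push`, `work_queue`, and `PushHandler` are hypothetical names, and the in-process queue is an assumption made for illustration, not the setup described in the question.

```python
import base64
import json
import queue
from http.server import BaseHTTPRequestHandler

# Hypothetical in-process queue; a separate worker would drain it.
work_queue = queue.Queue()


def handle_push(body, pending=work_queue):
    """Decode a push request body and return the HTTP status to send back."""
    try:
        envelope = json.loads(body)
        message = envelope["message"]
        payload = base64.b64decode(message.get("data", ""))
    except (ValueError, KeyError, TypeError, AttributeError):
        # Malformed request. A non-success status is not an acknowledgement,
        # so the sender would retry this delivery.
        return 400
    # Defer the slow work so the acknowledgement is not held up.
    pending.put((message.get("messageId"), payload))
    return 204  # success status acknowledges the message


class PushHandler(BaseHTTPRequestHandler):
    def do_POST(self):
        length = int(self.headers.get("Content-Length", 0))
        status = handle_push(self.rfile.read(length))
        self.send_response(status)
        self.end_headers()
```

The design choice here is to separate acknowledging from processing: the handler returns as soon as the message is safely queued.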

Question 9

Your company uses a proprietary system to send inventory data every 6 hours to a data ingestion service in the cloud. Transmitted data includes a payload of several fields and the timestamp of the transmission. If there are any concerns about a transmission, the system re-transmits the data. How should you deduplicate the data most efficiently?

- Assign global unique identifiers (GUID) to each data entry.

- Compute the hash value of each data entry, and compare it with all historical data.

- Store each data entry as the primary key in a separate database and apply an index.

- Maintain a database table to store the hash value and other metadata for each data entry.

Correct answer: A
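
One way to read answer A is sketched below in Python. The assumption is that the sender attaches a GUID when an entry is first created and reuses it on every re-transmission, so the receiver can detect duplicates by ID even though the transmission timestamp differs. `new_entry`, `transmit`, and `Deduplicator` are hypothetical names, and the in-memory set stands in for whatever store a real ingestion service would use.

```python
import uuid


def new_entry(payload):
    """Sender side: attach a GUID once, when the entry is first created."""
    return {"id": str(uuid.uuid4()), "payload": payload}


def transmit(entry, timestamp):
    """Build a transmission; a re-send reuses the GUID with a new timestamp."""
    return {**entry, "sent_at": timestamp}


class Deduplicator:
    """Receiver side: accept each GUID only once."""

    def __init__(self):
        self._seen = set()

    def accept(self, message):
        if message["id"] in self._seen:
            return False  # duplicate of an earlier transmission
        self._seen.add(message["id"])
        return True
```

A short usage example: two transmissions of the same entry share an ID, so only the first is accepted.

```python
entry = new_entry({"sku": "A1", "qty": 5})
dedup = Deduplicator()
dedup.accept(transmit(entry, timestamp=1))  # True
dedup.accept(transmit(entry, timestamp=2))  # False
```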

Question 10

Your company has hired a new data scientist who wants to perform complicated analyses across very large datasets stored in Google Cloud Storage and in a Cassandra cluster on Google Compute Engine. The scientist primarily wants to create labelled data sets for machine learning projects, along with some visualization tasks. She reports that her laptop is not powerful enough to perform her tasks and it is slowing her down. You want to help her perform her tasks. What should you do?

- Run a local version of Jupyter on the laptop.

- Grant the user access to Google Cloud Shell.

- Host a visualization tool on a VM on Google Compute Engine.

- Deploy Google Cloud Datalab to a virtual machine (VM) on Google Compute Engine.

Correct answer: D

HOW TO OPEN VCE FILES

Use VCE Exam Simulator to open VCE files

HOW TO OPEN VCEX FILES

Use ProfExam Simulator to open VCEX files

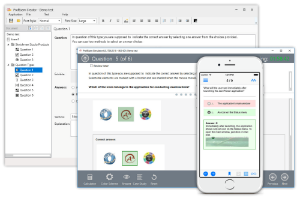

ProfExam at a 20% markdown

You have the opportunity to purchase ProfExam at a 20% reduced price

Get Now!