Download Designing and Implementing a Data Science Solution on Azure.DP-100.ExamTopics.2026-03-30.191q.tqb

| Field | Value |
| --- | --- |
| Vendor | Microsoft |
| Exam Code | DP-100 |
| Exam Name | Designing and Implementing a Data Science Solution on Azure |
| Date | Mar 30, 2026 |
| File Size | 10 MB |

How to open TQB files?

Files with TQB (Taurus Question Bank) extension can be opened by Taurus Exam Studio.


Demo Questions

Question 1

You are designing an Azure Machine Learning solution.

The model must be trained by using automated machine learning. The compute must be a shared resource with users in the Azure Machine Learning workspace. After you train the model, it must be deployed for batch scoring on a serverless compute.

You need to select the appropriate computation options for the solution.

Which compute options should you select for training and deployment? To answer, move the appropriate compute options to the correct project activities. You may use each compute option once, more than once, or not at all. You may need to move the split bar between panes or scroll to view content.

NOTE: Each correct selection is worth one point.

Correct answer: To work with this question, an Exam Simulator is required.

Question 2

Note: This question is part of a series of questions that present the same scenario. Each question in the series contains a unique solution that might meet the stated goals. Some question sets might have more than one correct solution, while others might not have a correct solution.

After you answer a question in this section, you will NOT be able to return to it. As a result, these questions will not appear in the review screen.

You have an Azure Machine Learning workspace that includes an AmlCompute cluster and a batch endpoint.

You clone a repository that contains an MLflow model to your local computer.

You need to ensure that you can deploy the model to the batch endpoint.

Solution: Create a datastore in the workspace.

Does the solution meet the goal?

- A. Yes

- B. No

Correct answer: B

Question 3

Note: This question is part of a series of questions that present the same scenario. Each question in the series contains a unique solution that might meet the stated goals. Some question sets might have more than one correct solution, while others might not have a correct solution.

After you answer a question in this section, you will NOT be able to return to it. As a result, these questions will not appear in the review screen.

You manage an Azure Machine Learning workspace. The Python script named script.py reads an argument named training_data. The training_data argument specifies the path to the training data in a file named dataset1.csv.

You plan to run the script.py Python script as a command job that trains a machine learning model.

You need to provide the command to pass the path for the dataset as a parameter value when you submit the script as a training job.

Solution: python train.py --training_data training_data

Does the solution meet the goal?

- A. Yes

- B. No

Correct answer: B
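
For context, the following is a minimal sketch of how a script such as script.py might read the training_data argument, together with the shape of command string that SDK v2 command jobs use to bind a job input to that argument. The `parse_args` helper and the `JOB_COMMAND` constant are illustrative names, not part of the question; the actual contents of script.py are not shown in the source.

```python
import argparse


def parse_args(argv=None):
    """Parse the arguments a training script like script.py is assumed to expect."""
    parser = argparse.ArgumentParser()
    # --training_data carries the path to the training data file (e.g. dataset1.csv).
    parser.add_argument("--training_data", type=str, required=True,
                        help="Path to the training data")
    return parser.parse_args(argv)


# Command string for a command job: the ${{inputs.training_data}} placeholder
# is replaced with the resolved path of the job input named training_data.
JOB_COMMAND = "python script.py --training_data ${{inputs.training_data}}"

# Local usage example with an explicit argument list instead of sys.argv.
args = parse_args(["--training_data", "dataset1.csv"])
print(f"Reading training data from: {args.training_data}")
```

Passing the literal text `training_data` would hand the script that string as the path, whereas the placeholder syntax lets the job substitute the real location of the input at run time.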

Question 4

You have an Azure Machine Learning workspace.

You plan to use the terminal to configure a compute instance to run a notebook.

You need to add a new R kernel to the compute instance.

In which order should you perform the actions? To answer, move all actions from the list of actions to the answer area and arrange them in the correct order.

Correct answer: To work with this question, an Exam Simulator is required.

Question 5

You manage an Azure Machine Learning workspace. You design a training job that is configured with a serverless compute.

The serverless compute must have a specific instance type and count.

You need to configure the serverless compute by using Azure Machine Learning Python SDK v2.

What should you do?

- A. Specify the compute name by using the compute parameter of the command job.

- B. Configure the tier parameter to Dedicated VM.

- C. Initialize and specify the ResourceConfiguration class.

- D. Initialize AmlCompute class with size and type specification.

Correct answer: C

Question 6

You manage an Azure Machine Learning workspace.

You plan to import and wrangle data stored in Azure Data Lake Storage Gen2 with Apache Spark.

You need to start interactive data wrangling with the user identity passthrough.

Which three actions should you perform in sequence? To answer, move the appropriate actions from the list of actions to the answer area and arrange them in the correct order.

Correct answer: To work with this question, an Exam Simulator is required.

Question 7

You have an Azure Machine Learning workspace.

You plan to use the workspace to set up automated machine learning training for an image classification model.

You need to choose the primary metric to optimize the model training.

Which primary metric should you choose?

- A. r2_score

- B. mean_absolute_error

- C. iou

- D. median_absolute_error

Correct answer: C

Question 8

You have an Azure Machine Learning workspace named WS1.

You plan to use Azure Machine Learning SDK v2 to register a model as an asset in WS1 from an artifact generated by an MLflow run. The artifact resides in a named output of a job used for the model training.

You need to identify the syntax of the path to reference the model when you register it.

Which syntax should you use?

- A. t//model/

- B. azureml://registries

- C. mlflow-model/

- D. azureml://jobs/

Correct answer: D
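
As an illustration, a path that references a named job output generally takes the form `azureml://jobs/<job-name>/outputs/<output-name>/paths/<relative-path>`. The sketch below only assembles that string; `job_output_model_path` is a hypothetical helper, and the job and output names in the usage line are made up for the example.

```python
def job_output_model_path(job_name: str, output_name: str, relative_path: str = "") -> str:
    """Build an azureml://jobs/ URI pointing at a path inside a named job output."""
    base = f"azureml://jobs/{job_name}/outputs/{output_name}/paths/"
    # Drop any leading slash so the relative path joins cleanly onto the base.
    return base + relative_path.lstrip("/")


# Hypothetical job and output names, for illustration only.
print(job_output_model_path("my_training_job", "model_output", "model/"))
```

The resulting string is the kind of value that would be supplied as the model path when the model asset is registered.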

Question 9

You are a data scientist working for a hotel booking website company. You use the Azure Machine Learning service to train a model that identifies fraudulent transactions.

You must deploy the model as an Azure Machine Learning online endpoint by using the Azure Machine Learning Python SDK v2. The deployed model must return real-time predictions of fraud based on transaction data input.

You need to create the script that is specified as the scoring_script parameter for the CodeConfiguration class used to deploy the model.

What should the entry script do?

- A. Register the model with appropriate tags and properties.

- B. Create a Conda environment for the online endpoint compute and install the necessary Python packages.

- C. Load the model and use it to predict labels from input data.

- D. Start a node on the inference cluster where the model is deployed.

- E. Specify the number of cores and the amount of memory required for the online endpoint compute.

Correct answer: C
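
A scoring script for an online endpoint conventionally exposes an `init()` function that loads the model once and a `run()` function that scores each request. The sketch below shows that shape under loud assumptions: the "model" is a stand-in JSON file named model.json holding a single threshold so the example stays dependency-free, and the `data` and `amount` field names are hypothetical. A real deployment would load the trained fraud model instead.

```python
import json
import os

model = None  # populated once by init()


def init():
    """Called once when the deployment starts: load the model into memory."""
    global model
    # AZUREML_MODEL_DIR points at the deployed model's files inside the container.
    model_dir = os.getenv("AZUREML_MODEL_DIR", ".")
    # Assumption: a stand-in JSON "model" with one fraud threshold.
    with open(os.path.join(model_dir, "model.json")) as f:
        model = json.load(f)


def run(raw_data):
    """Called for each request: parse the JSON input and return predicted labels."""
    rows = json.loads(raw_data)["data"]
    threshold = model["threshold"]
    # Label a transaction as fraud (1) when its amount exceeds the threshold.
    return [1 if row["amount"] > threshold else 0 for row in rows]
```

Registering the model, building the environment, and sizing the compute all happen outside this script; it is only responsible for loading the model and producing predictions.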

Question 10

Note: This question is part of a series of questions that present the same scenario. Each question in the series contains a unique solution that might meet the stated goals. Some question sets might have more than one correct solution, while others might not have a correct solution.

After you answer a question in this section, you will NOT be able to return to it. As a result, these questions will not appear in the review screen.

You have an Azure Machine Learning workspace. You connect to a terminal session from the Notebooks page in Azure Machine Learning studio.

You plan to add a new Jupyter kernel that will be accessible from the same terminal session.

You need to perform the task that must be completed before you can add the new kernel.

Solution: Create a compute instance.

Does the solution meet the goal?

- A. Yes

- B. No

Correct answer: B

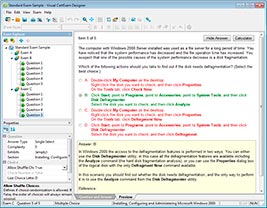

HOW TO OPEN VCE FILES

Use VCE Exam Simulator to open VCE files

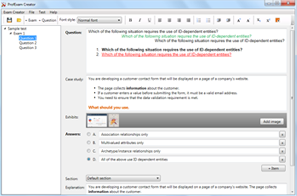

HOW TO OPEN VCEX FILES

Use ProfExam Simulator to open VCEX files

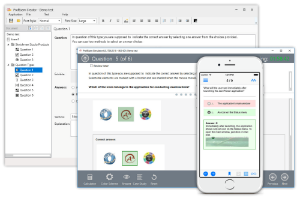

ProfExam at a 20% markdown

You have the opportunity to purchase ProfExam at a 20% reduced price

Get Now!