Download VMware Cloud Foundation 5.2 Architect.2V0-13.24.Actual4Test.2026-04-09.42q.tqb

| Field | Value |
| --- | --- |
| Vendor | VMware |
| Exam Code | 2V0-13.24 |
| Exam Name | VMware Cloud Foundation 5.2 Architect |
| Date | Apr 09, 2026 |
| File Size | 223 KB |

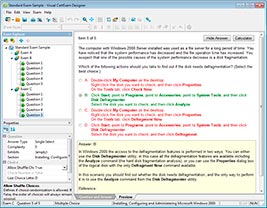

How to open TQB files?

Files with TQB (Taurus Question Bank) extension can be opened by Taurus Exam Studio.

Purchase

Coupon: TAURUSSIM_20OFF

Discount: 20%

Demo Questions

Question 1

Given a scenario, which design decision is necessary to ensure smooth workload migration into a VMware Cloud Foundation (VCF) environment?

Response:

- Ensuring that workloads are isolated in separate clusters

- Using VMware vSphere replication for seamless workload migration

- Configuring NSX to enable micro-segmentation of migrated workloads

- Setting up a shared storage infrastructure across all availability zones

Correct answer: B

Question 2

An architect was requested to recommend a solution for migrating 5000 VMs from an existing vSphere environment to a new VMware Cloud Foundation infrastructure. Which feature or tool can be recommended by the architect to minimize downtime and automate the process?

- VMware HCX

- vSphere vMotion

- VMware Converter

- Cross vCenter vMotion

Correct answer: A

Explanation:

When migrating 5000 virtual machines (VMs) from an existing vSphere environment to a new VMware Cloud Foundation (VCF) 5.2 infrastructure, the primary goals are to minimize downtime and automate the process as much as possible. VMware Cloud Foundation 5.2 is a full-stack hyper-converged infrastructure (HCI) solution that integrates vSphere, vSAN, NSX, and Aria Suite for a unified private cloud experience.

Given the scale of the migration (5000 VMs) and the requirement to transition from an existing vSphere environment to a new VCF infrastructure, the architect must select a tool that supports large-scale migrations, minimizes downtime, and provides automation capabilities across potentially different environments or data centers.

Let's evaluate each option in detail:

A: VMware HCX: VMware HCX (Hybrid Cloud Extension) is an application mobility platform designed specifically for large-scale workload migrations between vSphere environments, including migrations to VMware Cloud Foundation. HCX is included in VCF Enterprise Edition and provides advanced features such as zero-downtime live migration, bulk migration, and network extension. It automates the creation of hybrid interconnects between source and destination environments, enabling seamless VM mobility without requiring IP address changes (via Layer 2 network extension). HCX supports migrations from older vSphere versions (as early as vSphere 5.1) to the latest versions included in VCF 5.2, making it ideal for brownfield-to-greenfield transitions. For a migration of 5000 VMs, HCX's ability to perform bulk migrations (hundreds of VMs simultaneously) and its high-availability features (e.g., redundant appliances) ensure minimal disruption and efficient automation. HCX also integrates with VCF's SDDC Manager, aligning with the centralized management paradigm of VCF 5.2.

B: vSphere vMotion: vSphere vMotion enables live migration of running VMs from one ESXi host to another within the same vCenter Server instance with zero downtime. While this is an excellent tool for migrations within a single data center or vCenter environment, it is limited to hosts managed by the same vCenter Server.

Migrating VMs to a new VCF infrastructure typically involves a separate vCenter instance (e.g., a new management domain in VCF), which vMotion alone cannot handle. For 5000 VMs, vMotion would require manual intervention for each VM and would not scale efficiently across different environments or data centers, making it unsuitable as the primary tool for this scenario.

C: VMware Converter: VMware Converter is a tool designed to convert physical machines or other virtual formats (e.g., Hyper-V) into VMware VMs. It is primarily used for physical-to-virtual (P2V) or virtual-to-virtual (V2V) conversions rather than migrating existing VMware VMs between vSphere environments.

Converter involves downtime, as it requires powering off the source VM, cloning it, and then powering it on in the destination environment. For 5000 VMs, this process would be extremely time-consuming, lack automation for large-scale migrations, and fail to meet the requirement of minimizing downtime, rendering it an impractical choice.

D: Cross vCenter vMotion: Cross vCenter vMotion extends vMotion's capabilities to migrate VMs between different vCenter Server instances, even across data centers, with zero downtime. While this feature is powerful and could be used to move VMs to a new VCF environment, the original implementation requires both vCenter Server instances to be in the same Enhanced Linked Mode configuration (Advanced Cross vCenter vMotion, available since vSphere 7.0 Update 1c, lifts that requirement), and either form assumes compatible vSphere versions. For 5000 VMs, Cross vCenter vMotion lacks the bulk migration and automation capabilities offered by HCX, requiring significant manual effort to orchestrate the migration. Additionally, it does not provide network extension or the same level of integration with VCF's architecture as HCX.

Why VMware HCX is the Best Choice: VMware HCX stands out as the recommended solution for this scenario due to its ability to handle large-scale migrations (up to hundreds of VMs concurrently), minimize downtime via live migration, and automate the process through features like network extension and migration scheduling. HCX is explicitly highlighted in VCF 5.2 documentation as a key tool for workload migration, especially for importing existing vSphere environments into VCF (e.g., via the VCF Import Tool, which complements HCX). Its support for both live and scheduled migrations ensures flexibility, while its integration with VCF 5.2's SDDC Manager streamlines management. For a migration of 5000 VMs, HCX's scalability, automation, and minimal downtime capabilities make it the superior choice over the other options.
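The bulk-migration reasoning above comes down to moving the 5000 VMs in scheduled waves rather than one at a time. The following is a minimal planning sketch only: it does not call HCX or any VMware API, the `plan_waves` helper and the `vm-0000` style names are hypothetical, and the wave size of 200 is an illustrative assumption rather than a documented HCX limit.

```python
from __future__ import annotations

from typing import Iterator, Sequence


def plan_waves(vm_names: Sequence[str], wave_size: int) -> Iterator[list[str]]:
    """Yield consecutive migration waves of at most wave_size VMs each."""
    if wave_size <= 0:
        raise ValueError("wave_size must be positive")
    for start in range(0, len(vm_names), wave_size):
        yield list(vm_names[start:start + wave_size])


# Hypothetical inventory: 5000 placeholder VM names (not a real export).
inventory = [f"vm-{i:04d}" for i in range(5000)]

# 200 VMs per wave is an assumed figure chosen for illustration.
waves = list(plan_waves(inventory, wave_size=200))

print(len(waves))        # 25 waves
print(len(waves[0]))     # 200 VMs in the first wave
print(waves[-1][-1])     # vm-4999, the last VM of the last wave
```

In practice the wave size would be chosen from the available bandwidth and the change windows agreed with the business, and each wave would be handed to whatever scheduling mechanism the migration tool provides.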

References:

VMware Cloud Foundation 5.2 Release Notes (techdocs.broadcom.com)

VMware Cloud Foundation Deployment Guide (docs.vmware.com)

"Enabling Workload Migrations with VMware Cloud Foundation and VMware HCX" (blogs.vmware.com, May 3, 2022)

Question 3

Due to limited budget and hardware, an administrator is constrained to a VMware Cloud Foundation (VCF) consolidated architecture of seven ESXi hosts in a single cluster. An application that consists of two virtual machines hosted on this infrastructure requires minimal disruption to storage I/O during business hours.

Which two options would be most effective in mitigating this risk without reducing availability? (Choose two.)

- Apply 100% CPU and memory reservations on these virtual machines

- Implement FTT=1 Mirror for this application virtual machine

- Replace the vSAN shared storage exclusively with an All-Flash Fibre Channel shared storage solution

- Perform all host maintenance operations outside of business hours

- Enable fully automatic Distributed Resource Scheduling (DRS) policies on the cluster

Correct answer: B, D

Explanation:

The scenario involves a VCF consolidated architecture with seven ESXi hosts in a single cluster, likely using vSAN as the default storage (standard in VCF consolidated deployments unless specified otherwise). The goal is to minimize storage I/O disruption for an application's two VMs during business hours while maintaining availability, all within budget and hardware constraints.

Requirement Analysis:

- Minimal disruption to storage I/O: Storage I/O disruptions typically occur during vSAN resyncs, host maintenance, or resource contention.

- No reduction in availability: Solutions must not compromise the cluster's ability to keep VMs running and accessible.

- Budget/hardware constraints: Options requiring new hardware purchases are infeasible.

Option Analysis:

A: Apply 100% CPU and memory reservations on these virtual machines: Setting 100% CPU and memory reservations ensures these VMs get their full allocated resources, preventing contention with other VMs. However, this primarily addresses compute resource contention, not storage I/O disruptions. Storage I/O is managed by vSAN (or another shared storage), and reservations do not directly influence disk latency, resync operations, or I/O performance during maintenance. The VMware Cloud Foundation 5.2 Administration Guide notes that reservations are for CPU/memory QoS, not storage I/O stability. This option does not effectively mitigate the risk and is incorrect.

B: Implement FTT=1 Mirror for this application virtual machine: FTT (Failures to Tolerate) = 1 with a mirroring policy (RAID-1) in vSAN keeps two full copies of each VM's data on different hosts, providing fault tolerance. During business hours, if a host fails or enters maintenance, the surviving copy continues to serve I/O, so the application stays available without waiting for a rebuild. With FTT=0 there is no second copy: a host failure makes the affected data unavailable, and maintenance requires evacuating the data first, both of which disrupt I/O. The VCF Design Guide recommends FTT=1 for critical applications to balance availability and performance. This option leverages existing hardware, maintains availability, and reduces I/O disruption risk, making it correct.

C: Replace the vSAN shared storage exclusively with an All-Flash Fibre Channel shared storage solution: Switching to All-Flash Fibre Channel could improve I/O performance and potentially reduce disruption (e.g., faster rebuilds), but it requires purchasing new hardware (Fibre Channel HBAs, switches, and storage arrays), which violates the budget constraint. Additionally, transitioning from vSAN (integral to VCF) to external storage in a consolidated architecture is unsupported without significant redesign, as per the VCF 5.2 Release Notes. This option is impractical and incorrect.

D: Perform all host maintenance operations outside of business hours: Host maintenance (e.g., patching, upgrades) in vSAN clusters triggers data resyncs as VMs and data are evacuated, potentially disrupting storage I/O during business hours. Scheduling maintenance outside business hours avoids this, ensuring I/O stability when the application is in use. This leverages DRS and vMotion (standard in VCF) to move VMs without downtime, maintaining availability. The VCF Administration Guide recommends off-peak maintenance to minimize impact, making this a cost-effective, availability-preserving solution. This option is correct.

E: Enable fully automatic Distributed Resource Scheduling (DRS) policies on the cluster: Fully automated DRS balances VM placement and migrates VMs to optimize resource usage. While this improves compute efficiency and can reduce contention, it does not directly mitigate storage I/O disruptions. DRS migrations can even temporarily increase I/O (e.g., during vMotion), and vSAN resyncs (triggered by maintenance or failures) are unaffected by DRS. The vSphere Resource Management Guide confirms DRS focuses on CPU/memory, not storage I/O. This option is not the most effective here and is incorrect.

Conclusion: The two most effective options are Implement FTT=1 Mirror for this application virtual machine (B) and Perform all host maintenance operations outside of business hours (D). These ensure storage redundancy and schedule disruptive operations outside critical times, maintaining availability without additional hardware.
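To make the hardware side of option B concrete, the sketch below applies the standard vSAN RAID-1 rules of thumb: tolerating n failures with mirroring needs n+1 data copies and at least 2n+1 hosts. The function names and the 500 GB disk size are hypothetical, and the capacity figure ignores witness components and other overheads, so treat it as a rough estimate rather than a sizing tool.

```python
def raid1_min_hosts(ftt: int) -> int:
    """Minimum hosts for RAID-1 mirroring that tolerates `ftt` failures (2n + 1)."""
    if ftt < 0:
        raise ValueError("ftt must be non-negative")
    return 2 * ftt + 1


def raid1_raw_capacity_gb(usable_gb: float, ftt: int) -> float:
    """Approximate raw capacity consumed: one full copy per replica (n + 1 copies)."""
    if ftt < 0:
        raise ValueError("ftt must be non-negative")
    return usable_gb * (ftt + 1)


cluster_hosts = 7      # from the scenario in this question
ftt = 1                # policy proposed in option B
vm_disk_gb = 500.0     # assumed disk size, for illustration only

print(raid1_min_hosts(ftt))                    # 3
print(cluster_hosts >= raid1_min_hosts(ftt))   # True
print(raid1_raw_capacity_gb(vm_disk_gb, ftt))  # 1000.0
```

With seven hosts the cluster comfortably exceeds the three-host minimum, which is why the policy can be applied on the existing hardware; the cost is roughly double the raw capacity for the protected VMs.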

References:

VMware Cloud Foundation 5.2 Design Guide (Section: vSAN Policies)

VMware Cloud Foundation 5.2 Administration Guide (Section: Maintenance Planning)

VMware vSphere 8.0 Update 3 Resource Management Guide (Section: DRS and Reservations)

VMware Cloud Foundation 5.2 Release Notes (Section: Consolidated Architecture)

Question 4

Given a scenario, what is the best approach for monitoring VMware Cloud Foundation health and performance?

Response:

- Using a custom dashboard to monitor each individual VCF component manually

- Relying on VMware Cloud Foundation native tools for monitoring and alerting

- Implementing VMware vRealize Operations for health, performance, and capacity management

- Setting up SNMP-based monitoring to capture basic infrastructure data

Correct answer: C

Question 5

An architect has come up with a list of design decisions after a workshop with the business stakeholders.

Which design decision describes a logical design decision?

- End users will interact with an application server hosted in Site A

- Sites A and B will each have a dedicated /16 network subnet

- End users should always experience instantaneous application response

- Asynchronous storage replication that satisfies a recovery point objective (RPO) of 15 minutes between Sites A and B

Correct answer: D

Question 6

During the requirements gathering workshop for a new VMware Cloud Foundation (VCF)-based Private Cloud solution, the customer states that the solution must:

* Provide a single interface for monitoring all components of the solution.

* Minimize the effort required to maintain the solution to N-1 software versions.

When creating the design document, under which design quality should the architect classify these stated requirements?

- Performance

- Recoverability

- Availability

- Manageability

Correct answer: D

Question 7

When creating a VMware Cloud Foundation logical design for a network infrastructure, which two elements must be included?

(Choose two)

Response:

- The choice of physical network cables used in the data center

- Configuration of firewall rules and load balancers

- Logical network segments such as VLANs

- The physical location of network switches

Correct answer: B, C

Question 8

What should be included in a conceptual model to ensure it reflects the scalability of the VMware Cloud Foundation solution?

(Choose two)

Response:

- The types of servers to be used for deployment

- The specific models and configurations of network switches

- Logical groupings of compute, storage, and networking resources

- The ability to scale storage and compute independently

Correct answer: C, D

Question 9

Which of the following is part of developing a risk mitigation strategy in IT architecture?

Response:

- Designing the system without assessing potential risks.

- Focusing only on the cost and ignoring technical risks.

- Identifying risks and planning strategies to reduce their impact.

- Ignoring all potential risks to speed up the design process.

Correct answer: C

Question 10

Which design decision should be considered when creating a VMware Cloud Foundation physical design for a vSAN configuration?

Response:

- Defining the compute resource pool size

- Choosing the optimal number of storage tiers based on workload requirements

- Deciding the physical network layout and VLAN tagging for vSAN traffic

- Selecting the management platform for physical devices

Correct answer: C

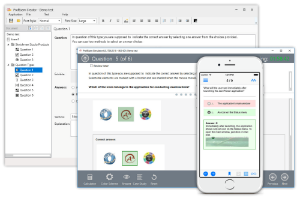

HOW TO OPEN VCE FILES

Use VCE Exam Simulator to open VCE files

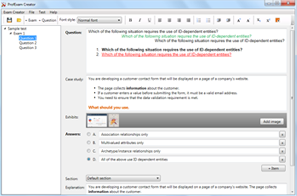

HOW TO OPEN VCEX FILES

Use ProfExam Simulator to open VCEX files

ProfExam at a 20% markdown

You have the opportunity to purchase ProfExam at a 20% reduced price

Get Now!